You seriously won’t believe how easy it’s become for people to scam food delivery apps these days. Some folks just whip up or tweak photos with AI—suddenly, that perfectly cooked burger looks raw or spoiled, and voilà, they demand a refund from DoorDash or whoever’s running the show.

I’ll walk you through how these fake photos actually fool the system, why apps usually hand out refunds with barely a glance, and what this wild trend means for restaurants, drivers, and the rest of us who just want dinner.

Let’s dig into the sneaky tricks scammers use, the fallout for the gig economy, and whether these platforms are even trying to keep up. I’ll also share a few tips for spotting doctored images and what both companies and regular people can do to slow this mess down.

How AI Is Used to Commit Fraud

Here’s how bad actors use generative tools to fake food problems, submit bogus refund claims, and sometimes even forge delivery proof. The whole scheme leans on platforms trusting customer photos and timestamps way too much.

Creating Fake Uncooked Food Photos with AI

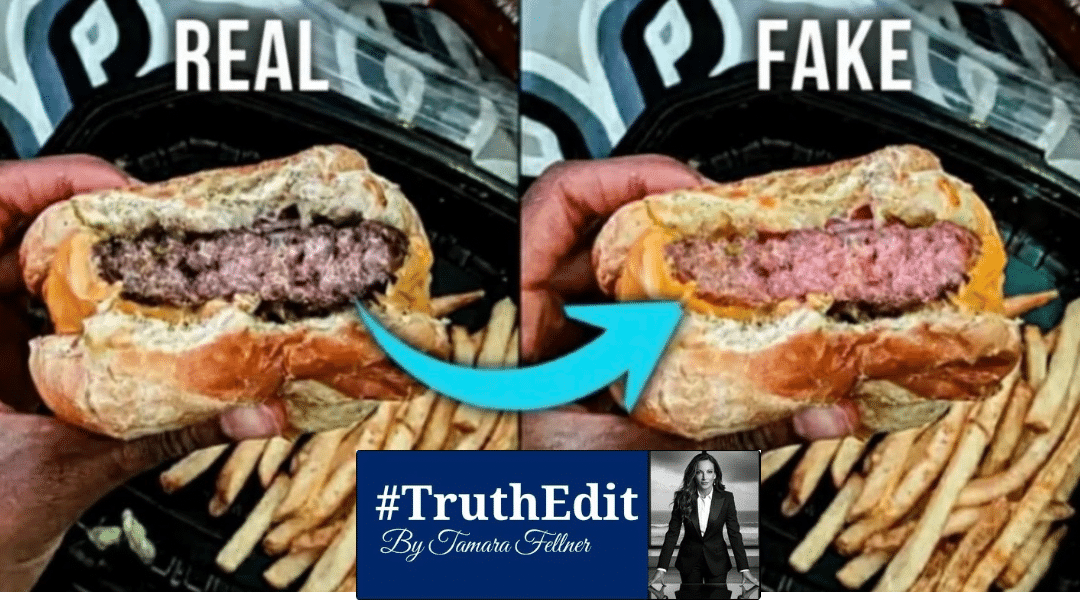

I’ve seen people fire up AI image generators and editors to doctor real photos or create totally fake shots that make a cooked meal look raw, gross, or even contaminated. They’ll upload a real meal pic and prompt the AI to desaturate it, slap on some pink tones, or mess with the textures. Or they’ll just tell it to spit out a “raw burger” from scratch.

It’s wild how specific prompts—like “undercooked beef, visible pink center, greasy bun”—get you scarily realistic results in seconds. These models even copy plate backgrounds, weird shadows, and condensation, which makes it a pain to spot fakes.

Then, users polish the images in regular photo editors, scrubbing out any obvious AI fingerprints and tossing in some phone-camera noise. That extra step helps dodge automated checks that look for signs an image isn’t legit.

Submitting AI-Generated Images for Refunds

Here’s the usual play: order food, snap (or fake) a “problem” photo, and send it to DoorDash or Uber Eats support with a complaint. The support systems lean heavily on photo evidence and often issue refunds automatically to keep things smooth and fast.

Scammers know this. They upload one convincing image, add a believable story—maybe the burger’s raw, maybe there’s a hair, whatever—and that’s often enough to trigger a refund. Sometimes they’ll toss in timestamps or delivery details to make it all look more real.

The whole trick works because platforms care more about happy customers than digging into every claim. Quick refunds mean fewer arguments, but it’s a goldmine for anyone using AI to fake complaints.

Expanding Methods: Drivers Using AI for Fake Deliveries

It’s not just customers, either. I’ve seen reports of drivers allegedly using AI to fake proof-of-delivery photos. Instead of snapping the actual drop-off, a driver might generate an image showing the food at the right address or a bag sitting by a door.

DoorDash even confirmed banning at least one driver over an AI-generated delivery photo, so they’re clearly watching. But some drivers keep trying, working around geotag checks or making fake pics that look like the real delivery spot.

They’re gaming the system by targeting the verification steps (photo, GPS, timestamp), so platforms have to check more signals, like app data, route history, or even the recipient’s confirmation, to catch the fakes.
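To make the "check more signals" idea concrete, here’s a minimal sketch of how a proof-of-delivery photo could be compared against other data. Everything in it is my own assumption for illustration: the `DeliveryProof` fields, the `proof_flags` helper, and the 150-meter and 10-minute thresholds are hypothetical, not anything DoorDash or Uber Eats has published.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from math import asin, cos, radians, sin, sqrt


@dataclass
class DeliveryProof:
    """Hypothetical record of what comes in with a drop-off photo."""
    photo_lat: float
    photo_lon: float
    photo_time: datetime
    receiver_confirmed: bool


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two lat/lon points."""
    r = 6_371_000  # mean Earth radius in meters
    dlat = radians(lat2 - lat1)
    dlon = radians(lon2 - lon1)
    a = sin(dlat / 2) ** 2 + cos(radians(lat1)) * cos(radians(lat2)) * sin(dlon / 2) ** 2
    return 2 * r * asin(sqrt(a))


def proof_flags(proof, drop_lat, drop_lon, app_arrival_time,
                max_distance_m=150, max_time_gap=timedelta(minutes=10)):
    """Return mismatches between the photo and the other signals.

    The thresholds are illustrative guesses, not real platform settings.
    """
    flags = []
    if haversine_m(proof.photo_lat, proof.photo_lon, drop_lat, drop_lon) > max_distance_m:
        flags.append("photo_far_from_address")
    if abs(proof.photo_time - app_arrival_time) > max_time_gap:
        flags.append("photo_time_mismatch")
    if not proof.receiver_confirmed:
        flags.append("no_receiver_confirmation")
    return flags
```

No single flag proves a fake. The point is that a generated photo has to agree with several independent signals at once, which is a lot harder to pull off than fooling one check.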

Spread Across Delivery Platforms

This isn’t just a DoorDash headache. Uber Eats and other delivery services are dealing with the same thing, since they all rely on customer photos and want to resolve disputes fast. I’ve seen plenty of social posts where people admit to using AI-edited pics to get refunds on different platforms.

Each app has its own defenses—some use image analysis, others want more metadata—but AI keeps closing the gap between real and fake. As these tools get better, fraud can spread fast unless verification gets smarter.

Restaurants and drivers are the ones stuck with the bill when platforms give out refunds on fake claims. That’s pushed some companies to tighten their fraud rules and cut back on automatic refunds for single-image complaints.

Impacts, Detection, and Platform Responses

Let’s talk about how these photo refund scams hit restaurants and drivers, what platforms do to catch them, and how the public’s reaction is forcing companies to step up—or at least try.

Consequences for Restaurants and Drivers

Restaurants eat the cost when customers claim their meals arrived raw and get full refunds. Small places lose not just the food but also the time and ingredients that went into it, on top of the commission the platform already takes. Margins are already tight, so this stuff really hurts.

Drivers get hit too. Platforms sometimes penalize them for disputes they didn’t cause. I’ve heard from Dashers and Uber Eats drivers who had orders canceled, accounts deactivated, or pay held up during investigations. For gig workers living paycheck to paycheck, that’s rough.

When fraud keeps happening, delivery fees go up and payout policies get stingier. Local spots sometimes get bad reviews or chargebacks tied to disputed orders, even when they did everything right.

Platforms’ Anti-Fraud Measures and Policy Changes

DoorDash and Uber Eats have rolled out more defenses: automated fraud models, manual reviews, and even partnerships with companies like Sift for payment security. They check device info, order history, and image details, and they look for suspicious refund patterns.
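Here’s a rough sketch of what combining those signals might look like for a single refund claim. To be clear, this is a toy rule-based score I made up for illustration. The `RefundClaim` fields, the weights, and the review threshold are all assumptions, and real fraud models are surely more sophisticated than this.

```python
from dataclasses import dataclass


@dataclass
class RefundClaim:
    """Hypothetical signals attached to one refund request."""
    orders_last_90d: int
    refunds_last_90d: int
    accounts_on_device: int          # distinct accounts seen on this device
    photo_has_camera_metadata: bool  # weak signal: many apps strip metadata


def refund_risk(claim):
    """Return (score, reasons) using illustrative, made-up weights."""
    score = 0
    reasons = []
    if claim.orders_last_90d:
        rate = claim.refunds_last_90d / claim.orders_last_90d
    else:
        rate = 0.0
    if rate > 0.3:
        score += 2
        reasons.append("high_refund_rate")
    if claim.accounts_on_device > 2:
        score += 1
        reasons.append("many_accounts_on_device")
    if not claim.photo_has_camera_metadata:
        score += 1
        reasons.append("photo_missing_metadata")
    return score, reasons


def route_claim(claim, review_threshold=2):
    """Send risky claims to a human instead of refunding automatically."""
    score, _ = refund_risk(claim)
    return "manual_review" if score >= review_threshold else "auto_refund"
```

The design choice worth noticing is that a risky score doesn’t deny the refund. It just takes the claim off the automatic path, so honest customers with one bad order still get a fast resolution.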

I’ve noticed some policy changes too—faster investigations, payout holds on disputed orders, and tougher penalties if you keep making claims. Sometimes they’ll ask for extra photos, timestamps, or in-app confirmations to double-check delivery issues.

I’m honestly wary about deepfakes and AI-powered scams. Experts like Byrne Hobart have pointed out that these tools keep getting better at faking images and messages, so platforms are trying out anomaly detection and checking image origins to spot synthetic media. I expect we’ll see more investment in machine learning that mixes behavior tracking with media forensics.
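For a feel of what the behavior-tracking half of anomaly detection can mean, here’s about the simplest version I can think of: flag accounts whose refund rate sits far above everyone else’s. The `flag_outliers` function, the sample rates, and the z-score cutoff of 2.0 are all hypothetical. Treat it as a sketch of the idea, not how any platform actually does it.

```python
from statistics import mean, pstdev


def flag_outliers(refund_rates, z_threshold=2.0):
    """Return account IDs whose refund rate is unusually high.

    refund_rates maps an account ID to its refund rate (0.0 to 1.0).
    An account is flagged when its z-score exceeds z_threshold.
    """
    values = list(refund_rates.values())
    if len(values) < 2:
        return []
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        return []  # everyone behaves the same, nothing stands out
    return sorted(
        account
        for account, rate in refund_rates.items()
        if (rate - mu) / sigma > z_threshold
    )


# Made-up sample data: seven ordinary accounts and one heavy refunder.
rates = {
    "a": 0.02, "b": 0.05, "c": 0.04, "d": 0.03,
    "e": 0.06, "f": 0.05, "g": 0.04, "h": 0.60,
}
suspicious = flag_outliers(rates)
```

A flag like this only says an account looks unusual. Pairing it with media forensics on the photos themselves is what would turn a hunch into evidence.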

Role of Social Media and Public Reaction

Social media really cranks up the volume on both scams and anti-fraud efforts. Viral posts about refund tricks or “how-to” guides just explode everywhere.

Platforms scramble to keep up, tightening rules and yanking down those step-by-step scam tutorials. Honestly, it’s a bit of a cat-and-mouse game.

Public pressure? Oh, it’s intense. Folks push DoorDash and Uber Eats to publish transparency reports and launch customer-education campaigns.

Everyone wants fair and fast resolutions, but drivers and restaurants are also out there, demanding clearer protections when disputes pop up.

Media coverage and influencers kind of stir the pot, too. Sometimes, their spotlight speeds up platform changes, but yeah, it can also give copycats some fresh ideas.

Clear communication, like spelling out what evidence you need or how to report stuff, helps cut down on sketchy claims. It’s not perfect, but it does rebuild a little trust among platforms, merchants, and drivers, which feels like a win.